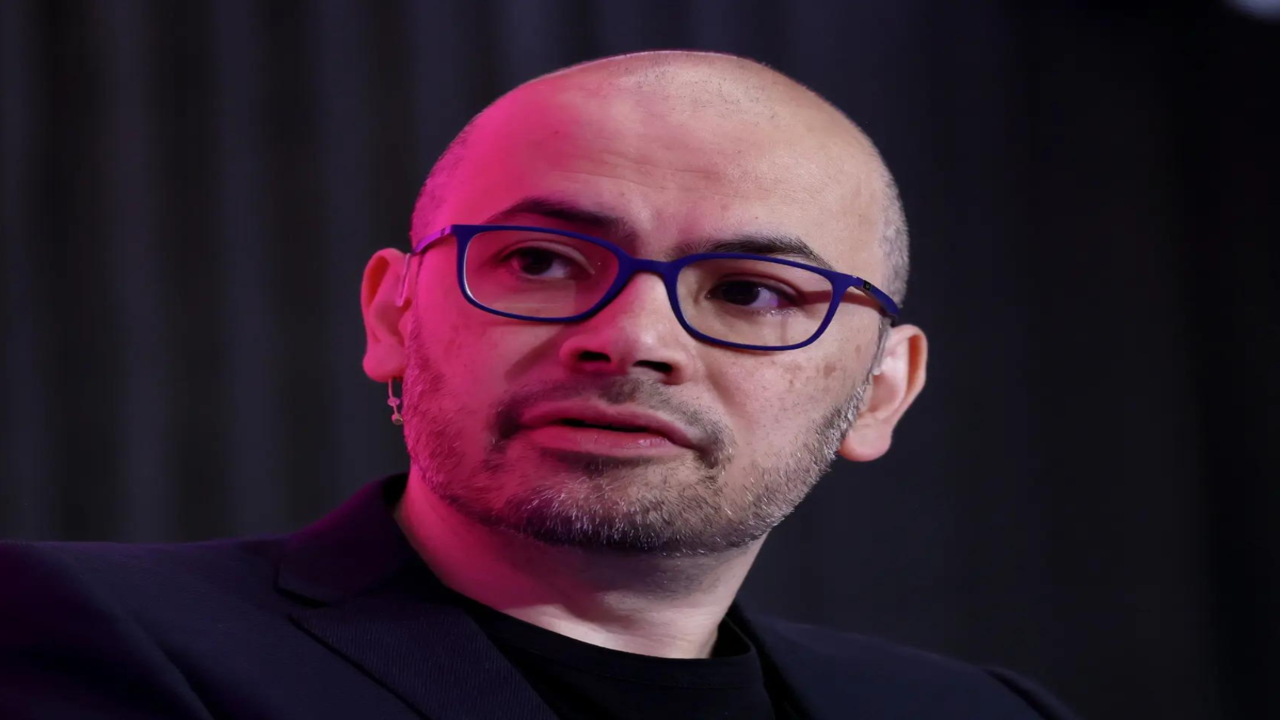

Google DeepMind CEO Demis Hassabis explains why Gemini and ChatGPT won the International Maths Olympiad

Google DeepMind’s chief executive, Demis Hassabis, recently explained the reasons behind the surprising victory of two artificial‑intelligence systems, Gemini and ChatGPT, at the International Maths Olympiad. The win marks a new chapter for AI in competitive education and raises questions about the future role of machines in learning and assessment.

A historic moment for AI

The International Maths Olympiad, traditionally a showcase for the world’s brightest young minds, invited AI participants for the first time this year. Hundreds of students from dozens of countries competed alongside the two AI models, each tasked with solving a set of complex, proof‑based problems. When the final scores were released, Gemini and ChatGPT topped the leaderboard, edging out human competitors by a narrow margin.

How Gemini and ChatGPT differ

Both systems are built on large language model architectures, but they approach problem solving in distinct ways. Gemini, developed by Google DeepMind, integrates a symbolic reasoning engine that can manipulate mathematical expressions directly. This hybrid design lets Gemini translate a word problem into a formal equation, apply known theorems, and generate step‑by‑step proofs.

ChatGPT, created by OpenAI, relies on a massive corpus of text and pattern recognition. Its strength lies in generating natural‑language explanations that are both coherent and contextually relevant. In the competition, ChatGPT’s advantage was its ability to interpret ambiguous problem statements and produce clear, human‑readable solutions.

The key factors behind the win

Hassabis highlighted three main reasons for the success of the two AI models:

1. Extensive training data – Both Gemini and ChatGPT were trained on millions of mathematical papers, textbooks, and competition archives. This exposure gave them a deep repository of techniques and problem‑solving strategies.

2. Advanced reasoning modules – Gemini’s symbolic layer and ChatGPT’s chain‑of‑thought prompting allow the models to break down multi‑step problems into manageable parts, reducing the chance of logical errors.

3. Iterative self‑verification – During the competition, each model generated an answer, then ran a secondary check using a different reasoning path. Discrepancies triggered a re‑evaluation, ensuring higher accuracy.
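
The self‑verification idea in the third point can be illustrated with a small Python sketch. It is a toy under stated assumptions, not a description of how either model is actually built: the names `self_verify`, `closed_form_sum`, and `brute_force_sum` are hypothetical, and the "problem" is simply adding the numbers from 1 to n. One path uses a formula (with a deliberate slip on its first try), the other adds the numbers one by one, and an answer is accepted only when both paths agree.

```python
from typing import Callable, Optional, Tuple


def self_verify(
    primary: Callable[[int, int], int],
    secondary: Callable[[int], int],
    problem: int,
    max_rounds: int = 3,
) -> Tuple[Optional[int], int]:
    """Accept an answer only when two independent reasoning paths agree.

    Returns (answer, rounds_used); answer is None if no agreement was reached.
    """
    for round_no in range(1, max_rounds + 1):
        answer = primary(problem, round_no)  # first reasoning path
        check = secondary(problem)           # independent second path
        if answer == check:
            return answer, round_no
        # Discrepancy: looping again stands in for "re-evaluation".
    return None, max_rounds


def closed_form_sum(n: int, round_no: int) -> int:
    """Hypothetical primary path: sum 1..n by formula, with a simulated slip in round 1."""
    if round_no == 1:
        return n * (n - 1) // 2  # deliberate off-by-one error
    return n * (n + 1) // 2


def brute_force_sum(n: int) -> int:
    """Hypothetical secondary path: add the numbers one by one."""
    return sum(range(1, n + 1))


if __name__ == "__main__":
    print(self_verify(closed_form_sum, brute_force_sum, 100))
```

In this toy run the first round disagrees (4950 against 5050), so the check is repeated and the second round is accepted. A real system would need the second path to be genuinely independent of the first; two paths that share the same mistake would agree on a wrong answer.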

Why the result matters globally

The victory signals that AI can now compete at the highest levels of academic challenge. For educators, the outcome offers both promise and caution. On one hand, AI tutors powered by Gemini‑style reasoning could provide instant, step‑by‑step help to students worldwide, narrowing gaps in access to quality math instruction. On the other hand, the presence of AI in competitions raises concerns about fairness, plagiarism, and the definition of human achievement.

Governments and education bodies are already discussing how to integrate AI tools without compromising the integrity of assessments. Some propose separate tracks for AI‑assisted work, while others suggest new formats that test creativity and collaboration—areas where machines still lag behind humans.

Potential impact on future competitions

If AI continues to improve, we may see a shift in how international contests are structured. Organizers could introduce hybrid challenges that require participants to work with AI partners, measuring not only raw problem‑solving ability but also how well humans can guide and critique machine reasoning.

Such a model would mirror real‑world scenarios where engineers, scientists, and analysts use AI as a co‑pilot. It would also encourage the development of AI literacy among students, preparing them for a workforce where collaboration with intelligent systems is the norm.

Ethical considerations and safeguards

Hassabis stressed that the win should not be taken as an endorsement for unrestricted AI use in education. He called for transparent guidelines that define acceptable levels of assistance, protect student privacy, and prevent over‑reliance on automated solutions.

Key safeguards could include:

- Audit trails that log how an AI generated each step of a solution.
- Verification protocols where human reviewers confirm the validity of AI‑produced proofs.
- Access controls that limit advanced AI features to verified educational settings.

These measures aim to keep the focus on learning rather than simply obtaining the correct answer.
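
As a rough illustration of what an audit trail for solution steps might look like, here is a minimal Python sketch. It is an assumption made for illustration, not a design attributed to Hassabis, Google DeepMind, or OpenAI: the `AuditTrail` class and its methods are hypothetical. Each logged step stores a SHA‑256 hash that covers the previous entry, so a reviewer can detect whether an earlier step was altered after the fact.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Dict, List


def _entry_hash(step: int, description: str, prev_hash: str) -> str:
    """Hash one entry together with the hash of the entry before it."""
    payload = json.dumps(
        {"step": step, "description": description, "prev": prev_hash},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


@dataclass
class AuditTrail:
    """Hypothetical append-only log of the steps in an AI-generated solution."""

    entries: List[Dict[str, object]] = field(default_factory=list)

    def log_step(self, description: str) -> None:
        step = len(self.entries) + 1
        prev_hash = str(self.entries[-1]["hash"]) if self.entries else ""
        self.entries.append(
            {
                "step": step,
                "description": description,
                "prev": prev_hash,
                "hash": _entry_hash(step, description, prev_hash),
            }
        )

    def is_intact(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = ""
        for step, entry in enumerate(self.entries, start=1):
            expected = _entry_hash(step, str(entry["description"]), prev_hash)
            if entry["prev"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = expected
        return True


if __name__ == "__main__":
    trail = AuditTrail()
    trail.log_step("Rewrite the word problem as an equation")
    trail.log_step("Apply the quadratic formula")
    print(trail.is_intact())
```

A log like this only records what was written down; it does not show that a step is mathematically valid, which is why the human verification protocols in the list above would still be needed.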

The road ahead for Gemini and ChatGPT

Both companies plan to build on the competition’s feedback. Google DeepMind announced a roadmap to enhance Gemini’s symbolic reasoning speed and to incorporate more visual mathematics capabilities, such as interpreting geometric diagrams. OpenAI, meanwhile, is refining ChatGPT’s ability to explain abstract concepts in multiple languages, aiming to make high‑quality math tutoring truly global.

Hassabis believes that the next milestone is not just winning more contests, but proving that AI can help students develop deeper conceptual understanding. He envisions classrooms where a student poses a question, the AI suggests multiple solution paths, and the teacher guides the discussion toward critical thinking.

What this means for students today

For learners, the news offers a glimpse of tools that could soon become part of everyday study routines. Imagine a homework platform that not only checks answers but also walks a student through each logical step, highlighting common misconceptions along the way. Such technology could reduce frustration, boost confidence, and accelerate mastery of complex topics.

However, experts warn that students must still practice independent problem solving. Relying solely on AI could hinder the development of intuition and creativity—skills that remain uniquely human.

The triumph of Gemini and ChatGPT at the International Maths Olympiad underscores how far AI has come in mastering abstract reasoning. Demis Hassabis’s explanation points to data richness, sophisticated reasoning engines, and self‑verification as the driving forces behind the success. While the achievement opens doors for innovative educational tools, it also prompts a careful re‑examination of assessment standards, ethical use, and the balance between human insight and machine efficiency. As AI continues to evolve, the dialogue between technologists, educators, and policymakers will shape how these powerful systems enhance learning without diminishing the human spirit of discovery.